Back in February 2015 I was lucky enough to present a paper at a workshop in Durham organised by Louise Amoore and Volha Piotukh from the Durham University Geography Department. Thinking with Algorithms: Cognition and Computation in the work of N. Katherine Hayles (which featured Hayles as a guest and keynote speaker) was an incredibly useful forum in which to present the first conference draft of my Language in the Age of Algorithmic Reproduction paper. As well as some very welcome positive comments, I also had some incisive, critical feedback which – quite rightly – highlighted my selective use of the Walter Benjamin essay. I had indeed conveniently skirted around the issue of the fascist aestheticisation of politics, which forms much of the second half of the essay, and I have devoted much time over the last few months to trying to find an answer to Martin Coward’s post-panel question. To be honest, I’m still pondering…

This was the abstract for the paper I presented:

Language in the Age of Algorithmic Reproduction

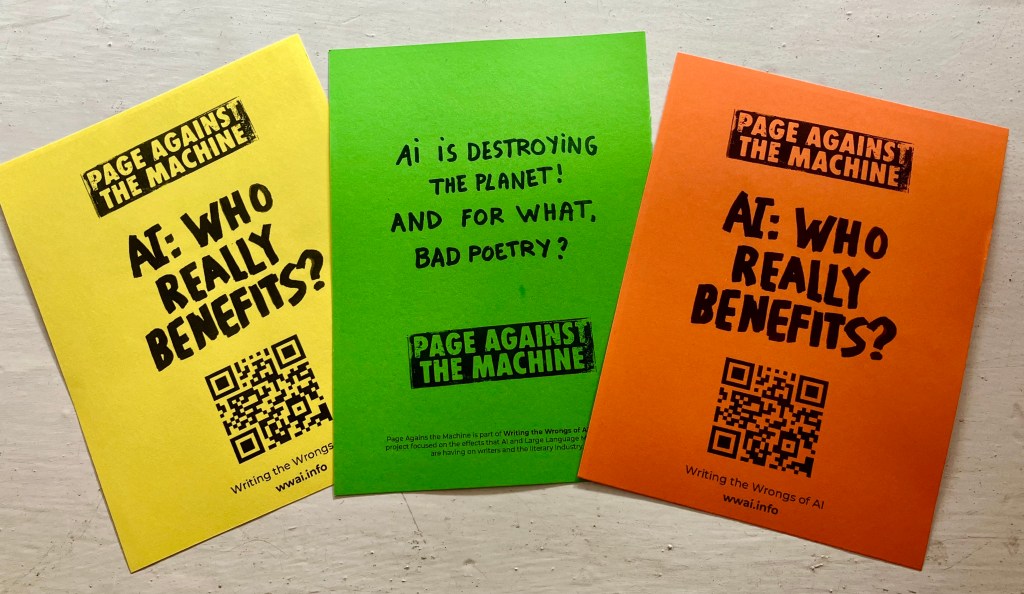

This paper is a slightly experimental reworking of Walter Benjamin’s seminal essay The Work of Art in the Age of Mechanical Reproduction (1936), and seeks to examine what happens to writing, language and meaning when processed by algorithm, and in particular, when reproduced through search engines such as Google. Reflecting both the political and economic frame through which Benjamin examined the work of art, as mechanical reproduction abstracted it further and further away from its original ‘essence’, the processing of language through the search engine is similarly based on the distancing and decontextualization of ‘natural’ language from its source. While all algorithms are necessarily tainted with the residue of their creators, the data on which search algorithms can work is also not necessarily geographically or socially representative and can be ‘disciplined’ (Kitchin & Dodge, 2011) by encoding and categorisation, meaning that what comes out of the search engine is not necessarily an accurate (or entirely innocent) reflection of ‘society’. Added to, and inseparable from, these technologically influencing factors is the underlying and pervasive power of commerce and advertising. When a search engine is fundamentally linked to the market, the words on and through which it acts become commodities, stripped of material meaning, and moulded by the potentially corrupting and linguistically irreverent laws of ‘semantic capitalism’ (Feuz, Fuller & Stalder, 2011), and “by third parties in the pursuit of gain” (Benjamin). With the now near total ubiquity of the search engine (and particularly monopoly holders Google) as a means of extracting information in linguistic form, the algorithms which return search results and auto-predict our thoughts have a uniquely powerful and exponentially increasing agency in the production of knowledge.
So as “writing yields to flickering signifiers underwritten by binary digits” (Hayles, 1999), this paper will question what is gained and what is lost when we entrust language, knowledge and the interpretation of meaning to search engines, and will suggest that the algorithmic processing of data based on contingent input, commercial bias and unregulated black-boxed technologies is not only reducing and recoding natural language, but that this ‘reconstruction’ of language has far-reaching societal and political consequences, re-introducing underlying binaries of power to both people and places. Just as mechanical reproduction ‘emancipated’ art from its purely ritualistic function, the algorithmic reproduction of language is an overtly political process.