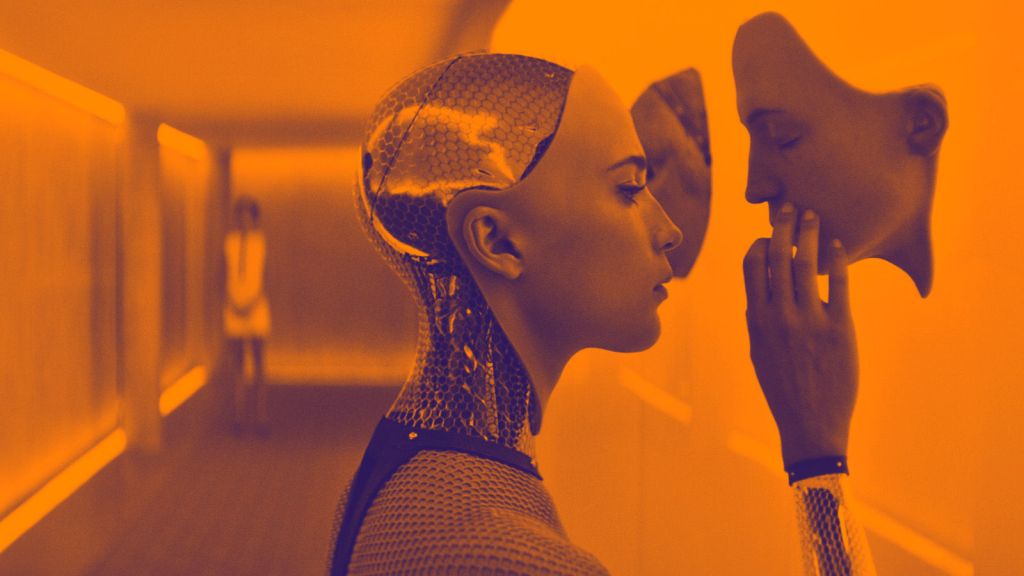

Earlier this week I curated and co-hosted Passengerfilms’ latest event in London (quite aptly within a stone’s throw of Silicon Roundabout). Called BEING HUMAN // HUMAN BEING, the event featured a screening and discussion of Alex Garland’s 2015 film Ex Machina. The fact that we sold out before we even started advertising I think goes to show not only what an awesome panel we had in Lee MacKinnon, John Danaher and Oli Mould, but that the possibilities, ethics and potential dangers of Artificial Intelligence really are at the forefront not only of academic debate, but also of a wider public imagination.

Ex Machina is such an incredibly rich and provocative film that it was impossible to cover everything in one short night, so I wanted to write a few thoughts down here, some of which were raised on the night, but others for which there was no time, or of which I’ve only just thought…

The Frankenstein Angle

The more I think about the film, the deeper, and more sinister the Frankenstein analogy becomes. I’ve called this post The Monster That ‘Google’ Created, by which I mean that the AI that is Ava is quite literally constructed out of search data – presumably the questions we put into Google (the thinly disguised BlueBook in the film), as well as the answers we get out. As I mentioned on the night, I think this premise is flawed in that if you really did build an AI using a mass harvesting of search data, then it would be impossible to remove the commercial element from this data, and your AI would constantly be trying to sell you something you looked up months ago. As has been reported recently, Microsoft’s AI Twitter bot turned into a Hitler loving sex robot in a matter of hours, which for me illustrates how competing influences will always underpin, problematize and corrupt ‘live’ web data, whether it’s financial, political or social media capital being sought. But this aside, what’s really interesting is the comparison with how Frankenstein’s monster gains its intelligence. Not only does Mary Shelley’s creation spend months spying on a family through a hole in the wall from its hiding place, and therefore witnesses their daily interactions and conversations, but it reads books. And not just any books, but Milton’s Paradise Lost, Plutarch’s Lives of Illustrious Greeks and Romans, and Goethe’s Sorrows of Werter, carefully selected by Shelley to enable the monster to understand and ‘feel’ sympathy, love and anguish, the difference between good and evil, and to question the wretchedness of its creation and existence. Quite simply, from listening and reading, it learns to be human, with all the crushed hopes and heartbreak which that entails. In contrast, Ava has learned from the constructed, virtual, and unreliable ‘reality’ of linguistic search data. 
But however ‘intelligent’ or semantically aware a search engine can be, the language which flows through it is little more than a binary reduced, decontextualized jumble of words, reconstructed into recognisable language not by any laws of nature or ethics, but by mathematics and the laws of the market. You can’t replace theory with (big) data, and you can’t replace being human with it either.

And that is why, while I always feel hopelessly sorry for Frankenstein’s monster, I don’t share the sympathy or allegiance towards Ava which many (including Alex Garland himself) feel. She was offered kindness (in the form of Caleb), but chose to reject it. Frankenstein’s monster wants nothing more than to be loved, but is rejected by society because of its appearance, and only then turns nasty. In Ex Machina, Ava’s desire to love and be loved is nothing but a ploy, which is arguably a ‘human’ trait too, but I think the desire to be loved and helped is perhaps a more basic one, and for me that is why Ava doesn’t pass the Turing Test.

Ava’s dress sense

I am aware that I’ve been calling Frankenstein’s monster ‘it’, and Ava (who I may as well go ahead and call Nathan’s monster) ‘she’, even though Frankenstein’s monster is most definitely a male construction with sexual desire for a female. This may be because Frankenstein doesn’t name his monster like Nathan does, and he also doesn’t provide it with a handy array of manly wardrobe staples. But despite this, Frankenstein’s monster has a far more ‘human’ sexuality than Ava does – however well the sensors Nathan has kindly built between her legs might work. And so to Ava’s clothes… Now I realise that her clothes have been picked by Nathan, who probably drunk-ordered them online, but why have Ava choose the sexy dress and high heels for her escape? That’s not empowering – if that’s what Garland was going for – it’s just plain impractical. But then again, Ava has presumably learnt what will get her furthest in life in the outside world from the combined wisdom of web searches, which could explain the 6-inch heels. More inexplicable is why have her in mumsy floral dresses, woolly tights and a cardie in the Caleb ‘test’ scenes? And what does that say about Caleb’s pornography profile, on which her looks have apparently been modelled?

Gods, Machines and Nuclear Armageddon

I touched on this on Wednesday, but just wanted to expand and clarify a little. I’m really interested in why Alex Garland dropped the ‘Deus’ from the phrase ‘Deus Ex Machina’ for the title of the film. It could be something as simple as he thought it more catchy, but I think a close reading brings up some important issues. A ‘Deus Ex Machina’ is a plot device in which a divine/random/providential intervention saves or redeems a seemingly hopeless situation. From the Latin ‘God from the machine’, it stems from ancient Greek theatre when a ‘God’ would figuratively (or even literally) descend onto the stage to miraculously rescue or resolve an implausible or floundering dramatic plot. So in terms of Ex Machina, Alex Garland might well have thought – okay, so I want to make a film about an Artificial Intelligence which looks like a lady… now how am I going to do that and make it vaguely plausible? Enter Nathan – maverick, misogynistic tech billionaire with far too much time on his hands… And there you go – there’s Ava. To me, Nathan is the plot device that enables the story. There are more than enough references to Nathan as God in the film to support this theory; the nominative similarity of Ava to Eve, for example, but also the sequence when a wide-eyed Caleb states that if Nathan has created a conscious machine ‘it’s not the history of man… that’s the history of Gods’. Nathan then deliberately misquotes Caleb to make it sound like Caleb called him (Nathan), ‘not a man, but a god’. But if Nathan is the ‘god’ in the ‘god from the machine’, then why drop the Deus from the Deus Ex Machina? Could this be because a Deus Ex Machina is a last chance plot device… if you use it up/kill it off, then there is no longer any means of controlling what happens. Nathan’s monster, constructed and fed on the linguistic mutations of a humanity mediated by commerce and corporate power, has been let loose and there is nothing we can do about it. 
Which is, as I see it, an astonishingly accurate and astute critique of our dependence on (and faith in) big data, and the Google ‘machine’. And where I agree with Garland (and for this I can forgive him the heels), is that artificial intelligence without ‘humanity’ is potentially disastrous. The clues are there – the track Enola Gay is innocently played over Caleb unpacking in his windowless room, and the reference to Oppenheimer and the atomic bomb dropped by the Enola Gay over Hiroshima is even more explicit. Quoting Oppenheimer (who was himself quoting the Bhagavad Gita), ‘I am become death, The Destroyer of Worlds’, Caleb muses, while surveying Nathan’s empire with a nice glass of Chardonnay. It’s scary stuff, but then I suppose divorcing technology from humanity always will be.
